Validation Dataset

For the validation dataset, 30 random CXR images were obtained from the US Department of Veterans Affairs (VA) PACS (picture archiving and communication system). This dataset included 10 CXR images from hospitalized patients with COVID-19, 10 CXR images of pneumonia from patients without COVID-19, and 10 normal CXRs. COVID-19 diagnoses were confirmed with a positive test result from the Xpert Xpress SARS-CoV-2 polymerase chain reaction (PCR) platform. 18

Microsoft CustomVision

Microsoft CustomVision is an automated image classification and object detection system that is a part of Microsoft Azure Cognitive Services (azure.microsoft.com). It has a pay-as-you-go model with fees depending on the computing needs and usage. It offers a free trial to users for 2 initial projects. The service is online with an easy-to-follow graphical user interface. No coding skills are necessary.

We created a new classification project in CustomVision and chose a compact general domain for small size and easy export to the TensorFlow.js model format. TensorFlow.js is a JavaScript library that enables dynamic download and execution of ML models. After the project was created, we proceeded to upload our image dataset. Each class was uploaded separately and tagged with the appropriate label (covid pneumonia, non-covid pneumonia, or normal lung). The system rejected 16 COVID-19 images as duplicates. The final CustomVision training dataset consisted of 484 images of COVID-19 pneumonia, 500 images of non-COVID-19 pneumonia, and 500 images of normal lungs. Once the images were uploaded and training was initiated, CustomVision trained itself on the dataset (Figure 1).

Website Creation

CustomVision was used to train the model. It can be used to execute the model continuously, or the model can be compacted and decoupled from CustomVision. In this case, the model was compacted and decoupled for use in an online application. An Angular online application was created with TensorFlow.js. Within a user’s web browser, the model is executed when an image of a CXR is submitted, and confidence values for each classification are returned. In this design, after the initial webpage and model are downloaded, the webpage no longer needs to access any server components and performs all operations in the browser. Although the solution works well on mobile phone browsers and in low-bandwidth situations, the quality of predictions may depend on the browser and device used. At no time is an image submitted to the cloud.
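The step in which confidence values are returned for each classification can be sketched in TypeScript as follows. This is a minimal illustration, not the application's actual code: the names `CXR_LABELS`, `Prediction`, and `rankPredictions` are hypothetical, and the sketch assumes that the model emits one score per label in the same order as the label list. In a TensorFlow.js application, the scores would typically be read from the model's output tensor.

```typescript
// Labels as tagged in the training project. The ordering here is an
// assumption; a real exported model defines its own label order.
const CXR_LABELS: readonly string[] = [
  "covid pneumonia",
  "non-covid pneumonia",
  "normal lung",
];

// One classification result: a label and its confidence value.
interface Prediction {
  label: string;
  confidence: number;
}

// Pair each score with its label and sort from most to least confident.
// `scores` may be a plain array or a typed array such as Float32Array.
function rankPredictions(
  scores: ArrayLike<number>,
  labels: readonly string[],
): Prediction[] {
  if (scores.length !== labels.length) {
    throw new Error(
      `Expected ${labels.length} scores but received ${scores.length}`,
    );
  }
  return labels
    .map((label, i) => ({ label, confidence: scores[i] ?? 0 }))
    .sort((a, b) => b.confidence - a.confidence);
}

// Hypothetical scores for a single submitted image.
const exampleScores = [0.1, 0.7, 0.2];
const ranked = rankPredictions(exampleScores, CXR_LABELS);
console.log(ranked);
```

Because the ranking runs entirely on values already held in the browser, it is compatible with the design described above, in which no image leaves the device.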

Results

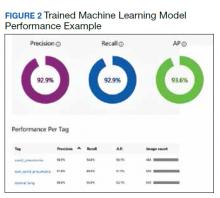

Overall, our trained model showed 92.9% precision and recall. Precision and recall results for each label were 98.9% and 94.8%, respectively, for COVID-19 pneumonia; 91.8% and 89%, respectively, for non-COVID-19 pneumonia; and 88.8% and 95%, respectively, for normal lung (Figure 2). Next, we proceeded to validate the trained model on the VA data by making individual predictions on 30 images from the VA dataset. Our model performed well with 100% sensitivity (recall), 95% specificity, 97% accuracy, 91% positive predictive value (precision), and 100% negative predictive value (Table).
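The VA validation figures can be reproduced from a binary confusion matrix that treats COVID-19 as the positive class. The TypeScript sketch below assumes 10 true positives, 1 false positive, 19 true negatives, and 0 false negatives. These counts are not stated in the text; they are the counts consistent with the reported percentages for 10 COVID-19 and 20 non-COVID-19 images. The names `ConfusionCounts`, `binaryMetrics`, and `pct` are illustrative.

```typescript
// Counts from a binary confusion matrix (positive class: COVID-19).
interface ConfusionCounts {
  tp: number; // COVID-19 images predicted as COVID-19
  fp: number; // non-COVID-19 images predicted as COVID-19
  tn: number; // non-COVID-19 images predicted as non-COVID-19
  fn: number; // COVID-19 images predicted as non-COVID-19
}

interface BinaryMetrics {
  sensitivity: number; // recall
  specificity: number;
  accuracy: number;
  ppv: number; // positive predictive value (precision)
  npv: number; // negative predictive value
}

// Standard definitions; this sketch does not guard against zero denominators.
function binaryMetrics(c: ConfusionCounts): BinaryMetrics {
  const total = c.tp + c.fp + c.tn + c.fn;
  return {
    sensitivity: c.tp / (c.tp + c.fn),
    specificity: c.tn / (c.tn + c.fp),
    accuracy: (c.tp + c.tn) / total,
    ppv: c.tp / (c.tp + c.fp),
    npv: c.tn / (c.tn + c.fn),
  };
}

// Format a proportion as a whole-number percentage.
function pct(x: number): string {
  return `${Math.round(x * 100)}%`;
}

// Assumed counts, inferred from the reported percentages (see lead-in).
const vaCounts: ConfusionCounts = { tp: 10, fp: 1, tn: 19, fn: 0 };
const vaMetrics = binaryMetrics(vaCounts);

console.log({
  sensitivity: pct(vaMetrics.sensitivity),
  specificity: pct(vaMetrics.specificity),
  accuracy: pct(vaMetrics.accuracy),
  ppv: pct(vaMetrics.ppv),
  npv: pct(vaMetrics.npv),
});
```

Under these assumed counts, accuracy is 29/30 and positive predictive value is 10/11, which round to the reported 97% and 91%.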

Discussion

We successfully demonstrated the potential of using AI algorithms in assessing CXRs for COVID-19. We first trained the CustomVision automated image classification and object detection system to differentiate COVID-19 pneumonia from pneumonia of other etiologies and from normal lung CXRs. We then tested our model on CXRs of patients with known diagnoses from the James A. Haley Veterans’ Hospital in Tampa, Florida. The program achieved 100% sensitivity (recall), 95% specificity, 97% accuracy, 91% positive predictive value (precision), and 100% negative predictive value in differentiating the 3 scenarios. Using the trained ML model, we proceeded to create a website that could augment COVID-19 CXR diagnosis. 19 The website works on mobile as well as desktop platforms. A health care provider can take a photo of a CXR with a mobile phone or upload the image file. The ML algorithm then provides the probability of a COVID-19 pneumonia, non-COVID-19 pneumonia, or normal lung diagnosis (Figure 3).